We usually imagine artificial intelligence living behind screens, quietly optimizing feeds, predicting clicks, and generating content on demand. But a new wave of designers is asking a more interesting question: what happens when AI steps into the physical world and becomes something you touch, smell, hold, or play with? The most compelling answers are not about speed or scale, but about meaning. From memory and reflection to music-making, these projects show how AI-powered devices can be genuinely useful by deepening human experience rather than replacing it.

Anemoia Device by MIT Media Lab

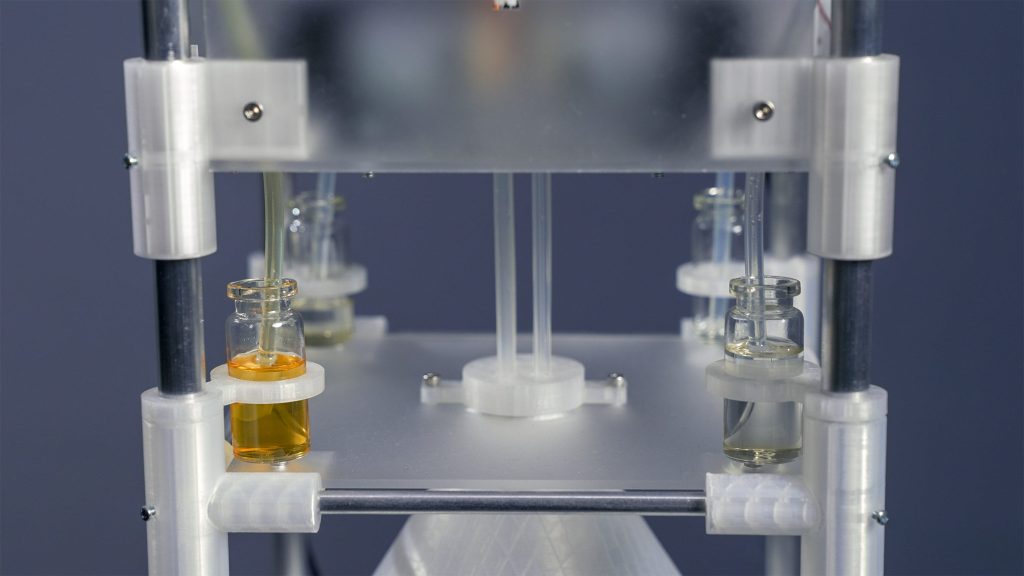

At the MIT Media Lab, researcher Cyrus Clarke has built a prototype that treats memory like a material that can be distilled. The Anemoia Device translates photographs into custom fragrances, using generative AI to interpret visual content and transform it into scent. The machine is structured as a vertical stack: an analogue photograph goes in at the top, an AI-powered computer in the middle analyzes the image, and a set of fragrance pumps at the bottom blends a bespoke aroma.

After the image is scanned, three physical dials allow people to shape the AI’s interpretation. Users can choose a point of view within the photo, such as a person or an object, define its stage of life or use, and assign an emotional tone. These choices guide the system away from a single literal reading and toward something more personal, even poetic.

Clarke frames the project around the concept of anemoia, a form of nostalgia for a time one has never lived through. While the machine can work with any photograph, he is particularly interested in inherited or archival images. Family photos, old recipes, and forgotten moments become raw material for scents that feel familiar without being tied to direct experience. In one trial, a calm, fruit-centered prompt led to a fragrance that evoked autumn, complete with spiced apple and earthy musk.

Under the hood, the system relies on a vision-language model and a library of 50 base scents, ranging from pine forest to old books. Each fragrance is released in one-second increments, allowing for a surprising range of combinations. More importantly, the narrative built through the dials helps the AI avoid clichés. Clarke sees this as part of a broader ambition to make memories tangible again, countering the way they are currently stored as files and feeds. His future visions include a desktop version for homes and a remote service, both aimed at turning technology into something that encourages pause, presence, and sensory awareness.
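
To make the one-second increments concrete, here is a minimal Python sketch of how a blend might be turned into whole-second pump times. It is an illustration only, not Clarke's implementation: the function name `pump_schedule`, the numeric weights, and the rounding rule (largest remainder) are all assumptions. Only the scent names and the one-second granularity come from the description above.

```python
from math import floor


def pump_schedule(weights, total_seconds):
    """Split total_seconds across scents in proportion to their weights.

    Hypothetical helper: every pump runs for a whole number of seconds,
    and the durations always add up to total_seconds.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0 or total_seconds < 0:
        raise ValueError("weights must sum to a positive value and time must be >= 0")

    # Ideal (fractional) duration for each scent.
    exact = {name: w / total_weight * total_seconds for name, w in weights.items()}
    # Round down first, then hand the leftover seconds to the scents
    # with the largest fractional parts (largest-remainder rounding).
    seconds = {name: floor(value) for name, value in exact.items()}
    leftover = total_seconds - sum(seconds.values())
    by_fraction = sorted(exact, key=lambda n: exact[n] - seconds[n], reverse=True)
    for name in by_fraction[:leftover]:
        seconds[name] += 1
    return seconds


# Assumed weights, standing in for whatever the vision-language model suggests.
blend = {"spiced apple": 3, "earthy musk": 2, "old books": 1}
schedule = pump_schedule(blend, total_seconds=5)
```

Even with coarse one-second steps, a handful of pumps chosen from a library of 50 yields a large number of distinct blends, which is consistent with the range of combinations described above.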

Cognitive Bloom by Map Project Office and Chanwoo Lee

While Anemoia focuses on memory, Cognitive Bloom turns AI toward introspection. Developed by industrial design studio Map Project Office with Royal College of Art graduate Chanwoo Lee, the concept imagines AI as a companion for slow, deliberate thinking rather than instant answers. The device lives in the home and invites users into daily rituals of reflection that gradually grow a virtual garden.

Cognitive Bloom consists of two nested elements: the Pond and the Garden. The Pond is a small, palm-sized object that users pick up when they want a moment of quiet thought. Once activated, it presents a “wordstream,” a sequence of single words that form reflective prompts. Interaction is intentional and tactile. Users press and hold the bezel, tilt the device to choose a mode of reflection, and then follow the words as they appear at an adjustable pace.

The choice to display text one word at a time is central to the design. Inspired by speed-reading software, this format demands focus and mirrors the flow of consciousness. The AI speaks in the first person, asking questions like “How did I feel about…?” rather than offering advice. According to Map’s designers, the goal is to broaden perspective gently, without flattering the user or reinforcing existing biases.

Over time, consistent use causes plants to grow on the Garden module, a visual reward that reflects commitment rather than productivity. Conversations are stored locally on the device, not in the cloud, reinforcing a sense of privacy and trust. For Lee, the project builds on earlier experiments with reflective AI and underscores the power of hardware to shape behavior.

MUSE by Hyeyoung Shin and Dayoung Chang

Where many AI music tools begin with typing prompts into a laptop, MUSE by Korean designers Hyeyoung Shin and Dayoung Chang starts with playing. Designed as a modular system for band musicians, MUSE treats AI as a responsive collaborator rather than a generator that waits for instructions. Instead of one device, it is a family of modules dedicated to vocals, drums, bass, synthesizer, and guitar, each designed to listen and respond in real time.

The workflow mirrors a rehearsal rather than a software session. A drummer taps patterns into the drum module, a singer hums into the vocal unit, and a guitarist strums ideas into their module. The AI picks up on timing, touch, and phrasing, then fills out parts with suggestions that align with what has just been played. The result feels less like a backing track and more like a bandmate offering ideas.

Physicality is a core part of the concept. The modules are small, colorful, and designed to live around the home rather than in a dedicated studio. A vocal unit can sit by the bed for late-night ideas, while a drum block might live on the coffee table for casual jams. Their textures and forms are matched to their roles, making them feel like approachable objects rather than intimidating gear.

MUSE ultimately asks how musicians can work with AI without losing their identity. By keeping the body, rhythm, and interaction at the center, it positions AI as something that learns a player’s groove over time. It is a subtle but important shift, one that suggests the future of creative tools may depend less on smarter algorithms and more on how thoughtfully they listen.

Together, these projects point to a future where AI-powered devices earn their place in daily life by being useful in deeply human ways. They remind us that innovation is not just about what technology can do, but about how it makes us feel when we use it.